Detailed Description

This section explains the system design in detail. In brief, the system consists of a wearable that uses AWS and MQTT both to train the gesture recognition model and to publish predictions to connected IoT devices. These IoT devices can perform any action, as long as they are subscribed to the MQTT broker.

Hardware Design & Data Collection (I2C)

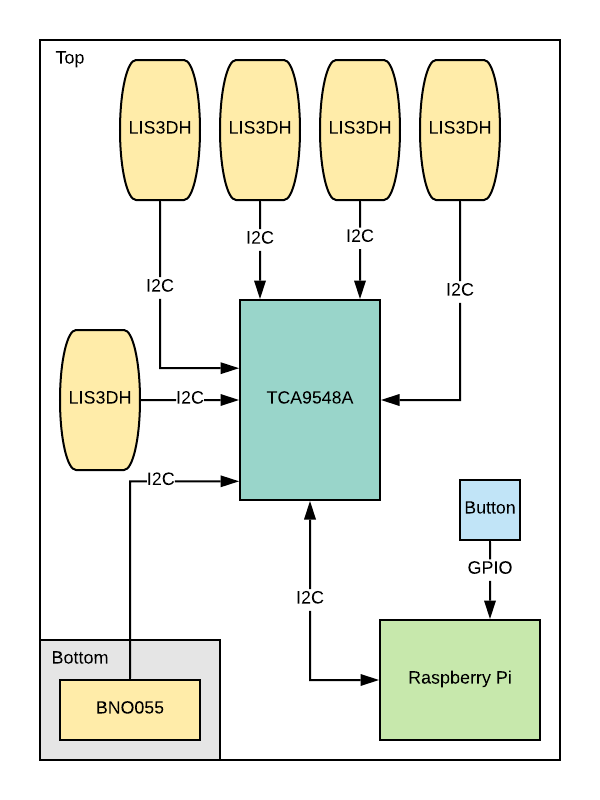

First, both training mode and prediction mode need the sensor data captured from the wearable during a gesture. The system reads every feature from every sensor over I2C once every 100 ms, for 10 readings in total, and concatenates the values into a single vector of length (number of features × 10 readings). After some preprocessing checks, the gesture label is prepended to this vector in training mode (SageMaker expects the label in the first column); in prediction mode the vector is simply sent to the AWS SageMaker endpoint.
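
The capture step described above can be sketched as follows. This is a minimal illustration, not the project's actual code: `capture_gesture` and the `read_features` callback are hypothetical names, and the callback stands in for whatever I2C driver calls return one reading from all sensors.

```python
import time
from typing import Callable, List, Optional, Sequence

READINGS_PER_GESTURE = 10  # from the text: 10 readings per gesture
SAMPLE_PERIOD_S = 0.1      # from the text: one reading every 100 ms


def capture_gesture(
    read_features: Callable[[], Sequence[float]],
    label: Optional[int] = None,
    period_s: float = SAMPLE_PERIOD_S,
    sleep: Callable[[float], None] = time.sleep,
) -> List[float]:
    """Collect 10 readings and flatten them into one vector.

    read_features is a hypothetical callback that returns one reading
    (all features from all sensors) as a flat sequence of numbers.
    If label is given (training mode), it is placed in the first column.
    """
    vector: List[float] = []
    n_features: Optional[int] = None
    for i in range(READINGS_PER_GESTURE):
        reading = list(read_features())
        if n_features is None:
            n_features = len(reading)
        elif len(reading) != n_features:
            # Simple preprocessing check: every reading must have the same width.
            raise ValueError("inconsistent number of features in reading %d" % i)
        vector.extend(reading)
        if i < READINGS_PER_GESTURE - 1:
            sleep(period_s)  # wait before taking the next reading
    if label is not None:
        vector.insert(0, label)  # training mode: label goes first
    return vector
```

In prediction mode the function is called without `label`, so the returned vector contains only the sensor values.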

The hardware design diagram is shown below.

Training Mode (S3, SageMaker)

If the system is in training mode, the full training data is sent as a .csv file to an AWS S3 bucket for storage. An AWS SageMaker Jupyter Notebook instance, running on AWS servers, then uses the training data file in the S3 bucket to generate or update a SageMaker endpoint. The training algorithm used in this project is SageMaker's adaptation of XGBoost for multi-class classification. For the purposes of this project, feature engineering is not used, as it would limit the number of unique gestures possible. The endpoint holds what AWS calls 'model artifacts' and allows clients to obtain real-time predictions. An endpoint (though not explored in this project) can also be updated with a model retrained on new data or with tuned hyperparameters for better results. This ability to update the model remotely is an advantage over models that reside on the device: it offloads computation from the device, at the monetary cost of cloud resources.
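
As a rough sketch of the upload step, the labelled vectors can be serialized as CSV rows (label in the first column, no header row, which is the layout SageMaker's built-in XGBoost expects for CSV input) and written to S3. The helper names, bucket, and key below are hypothetical; `s3_client` is assumed to be a boto3 S3 client such as `boto3.client("s3")`, whose `put_object` call is used here.

```python
import csv
import io
from typing import Iterable, Sequence


def rows_to_csv(rows: Iterable[Sequence[float]]) -> str:
    """Serialize labelled gesture vectors as header-less CSV text."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    for row in rows:
        writer.writerow(row)  # row[0] is the gesture label
    return buf.getvalue()


def upload_training_csv(s3_client, bucket: str, key: str,
                        rows: Iterable[Sequence[float]]) -> int:
    """Upload the training set to S3 and return the number of bytes sent.

    s3_client is assumed to expose boto3's put_object(Bucket=, Key=, Body=).
    """
    body = rows_to_csv(rows).encode("utf-8")
    s3_client.put_object(Bucket=bucket, Key=key, Body=body)
    return len(body)
```

Passing the client in as an argument keeps the serialization logic testable without AWS credentials.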

Prediction Mode (SageMaker, MQTT)

Once the system is in prediction mode, the captured data is sent to the SageMaker endpoint through an invocation by the Raspberry Pi. The endpoint responds with the predicted label and this label is immediately published to an MQTT topic by the Raspberry Pi.
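
The invoke-then-publish flow might look like the sketch below. It assumes `runtime` is a boto3 SageMaker runtime client (`boto3.client("sagemaker-runtime")`) and `mqtt_client` is a connected paho-mqtt client; the function name, endpoint name, and topic are placeholders, not values from the project.

```python
from typing import Sequence


def predict_and_publish(runtime, mqtt_client, vector: Sequence[float],
                        endpoint_name: str, topic: str) -> str:
    """Send one gesture vector to the endpoint and publish the predicted label.

    runtime is assumed to expose boto3's invoke_endpoint(), and mqtt_client
    is assumed to expose paho-mqtt's publish(topic, payload).
    """
    # The endpoint is assumed to accept one CSV row without a label column.
    payload = ",".join(str(value) for value in vector)
    response = runtime.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Body=payload,
    )
    # The response body is a stream; the raw label is returned as text.
    label = response["Body"].read().decode("utf-8").strip()
    mqtt_client.publish(topic, label)
    return label
```

The raw label is published unchanged, so each subscriber decides how to interpret it.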

The Raspberry Pi is configured with the Mosquitto MQTT broker, which starts automatically at boot. The IP address of this broker (the Raspberry Pi) is shared with the other IoT devices for MQTT communication. Conceptually, the MQTT broker does not need to reside on the Raspberry Pi, as long as the IoT devices to be controlled can connect to the same broker. In industry, the MQTT broker can be supplied by AWS IoT Core, so the connections can in principle extend beyond the range of a LAN.

Any IoT device can be controlled by this system, as it only needs to be connected to the same MQTT broker. In this project, an Intel Edison is used as a topic subscriber and also hosts a Django-powered web page that records the results of past predictions. A laptop is also subscribed to the MQTT topic so that its keyboard can be controlled. On receiving a prediction from the subscribed MQTT topic, the Intel Edison performs a specific task (e.g., turning on an LED or sounding a buzzer) and updates the web page with the expected action, gesture prediction, raw label, and sensor readings (if prompted to). The laptop performs a keyboard action (e.g., pressing the 'down' key to move to the next slide).
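
On the subscriber side, mapping a received label to an action can be sketched with a small dispatch table. The label numbers, action names, and `make_on_message` helper are illustrative assumptions; the returned callback follows the `(client, userdata, msg)` signature that paho-mqtt uses for `on_message`.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical mapping: label -> (action name, handler that performs it).
ActionTable = Dict[int, Tuple[str, Callable[[], None]]]


def make_on_message(actions: ActionTable, history: List[dict]):
    """Build an MQTT message callback that dispatches on the predicted label."""

    def on_message(client, userdata, msg):
        raw = msg.payload.decode("utf-8").strip()
        try:
            # The raw label may arrive as text such as "1.0".
            label = int(float(raw))
        except ValueError:
            history.append({"raw": raw, "label": None, "action": None})
            return
        name, handler = actions.get(label, (None, None))
        if handler is not None:
            handler()  # e.g. toggle an LED or press a key
        # Keep a record that a web page could later display.
        history.append({"raw": raw, "label": label, "action": name})

    return on_message
```

With paho-mqtt, the returned function would be assigned to the client's `on_message` attribute before subscribing to the topic; unknown or malformed labels are recorded but trigger no action.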
